We are surrounded by crappy software.

Pension funds are stumbling along on decades-old batch scripts built on faulty assumptions.

Credit agencies have leaked over a hundred million social security numbers and other confidential data.

Planes need to be rebooted every 51 days to prevent “potentially catastrophic” bugs.

Not to mention the tons of buggy and frustrating software that we see all around us, from smaller vendors and older enterprise systems.

Such incompetence would never fly in other engineering disciplines. We would never put up with bridges that are as buggy as your average software system. So why is software engineering so bad? Why is there so much crap software out there?

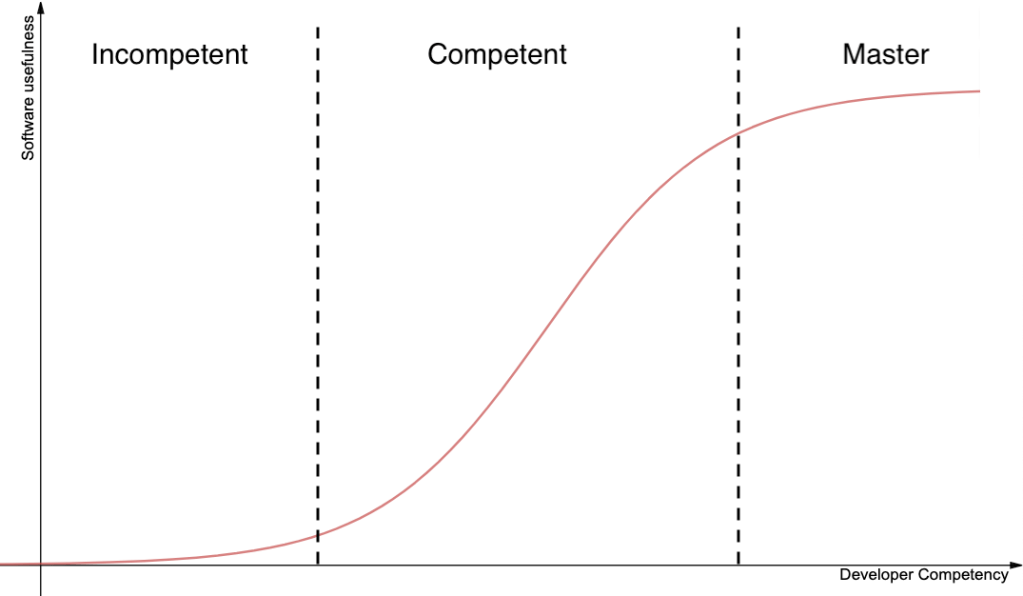

To understand the reason, we need to first understand how developer skill is related to the usefulness of the software they produce for a given task.

In the first bucket, you have the “developers” who are effectively incompetent. You might see a wide range of skill levels within this bucket… but they are all similar in that their end-product is functionally useless. Computers are ruthless in that regard – if you can’t figure out how to make your app compile, you might as well have not written it at all. If you can’t figure out how to build the core functionality of your app, absolutely no one will use it. Below a certain skill threshold, you will not produce any usable software. Most “developers” in this bucket may dabble with coding in their free time, but you will rarely find them producing professional software.

Once you go past this threshold however, things change. At the lower end, the developers are now good enough to produce minimally viable software systems. And as these developers get better and better, the usefulness of their software improves rapidly as well. An app that “worked” but was slow, buggy, insecure, and incomprehensible… slowly starts to become less buggy, more performant, more secure, and easier to understand. Unlike the other two buckets, as the developers in this bucket grow their skills, the software they produce becomes significantly better.

And finally, when developers reach a certain threshold of skill, they cross over into the third bucket. A bucket where everyone has reached such a high level of competency (relative to the problem they are solving), that further personal growth will make minimal difference to the end product. I.e., any random staff engineer at Google can build a CRUD app that is just as good as Jeff Dean’s.
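The three buckets can be sketched as a piecewise curve. This is only an illustration: the 0 to 1 scales, the two thresholds (`viable`, `mastery`), and the straight-line segments are assumptions made for the sketch, not measurements.

```python
def usefulness(skill: float, viable: float = 0.3, mastery: float = 0.8) -> float:
    """Map developer skill (0 to 1) to software usefulness (0 to 1).

    All numbers here are made up for illustration.
    """
    if skill < viable:
        # Bucket 1: below the threshold, nothing usable gets built.
        return 0.0
    if skill < mastery:
        # Bucket 2: usefulness climbs quickly as skill grows.
        return 0.1 + 0.8 * (skill - viable) / (mastery - viable)
    # Bucket 3: diminishing returns; extra skill barely changes the product.
    return 0.9 + 0.1 * (skill - mastery) / (1.0 - mastery)


for skill in (0.1, 0.3, 0.55, 0.8, 1.0):
    print(f"skill={skill:.2f} -> usefulness={usefulness(skill):.2f}")
```

With these made-up numbers, the curve rises more than three times as fast in the second bucket as in the third, which is the whole point of the model.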

In an ideal world, the only developers in the first and second buckets would be students or entry-level hires. And all professional software systems would be primarily built by developers in the third bucket. Developers who have mastered all the skills needed to solve the assigned problem, and produce solutions that are pretty darn close to the platonic ideal. In such a heavenly world, all the software that we see around us, would be at a similarly high level of quality … performing exactly as expected, at optimal performance, with no security holes. A world where the general public reacts to all software with joy, not frustration.

However, there are two problems standing between us and this utopia.

First, the number of developers in the 3rd bucket is exceedingly small, relative to the second bucket. Programming is an “easy to learn, hard to master” activity. Millions of people can write a functional script, but very few have mastered the art of software engineering. Further, there is no gatekeeping for the software industry whatsoever – no software equivalent to the American Medical Association for doctors, or the Bar Association for lawyers. Not surprisingly, you have far more people who are of novice and intermediate competency, as opposed to expert competency.

Second, the demand for developers is immense. There are opportunities for software developers to make huge improvements in almost every single industry. Compared to more niche professions such as astronomy, where opportunities are severely limited, software development is a talent-constrained field. I.e., the main bottleneck is finding talented software developers, not finding useful work for them to do.

Combine both of these problems, and most companies that want to hire expert developers are locked out from doing so. There aren’t enough expert developers available for them to hire, and the ones who are available often have unmatchably high offers from FANG companies or hot startups.

And so, every other company does the next best thing. They hire developers from the second bucket. Developers who can broadly be categorized as “good enough”. Their apps have bugs and security vulnerabilities, and they fail to handle high loads. But at least they are able to build something that “works”. Something that is more useful than the status quo. Something that can be rolled out into production with minimal regulatory scrutiny.

You might be tempted to think that the above is a natural state of the world, across all professions. But that is actually not the case.

There are tons of jobs that require significant training, but fall into the “easy to master” category. Jobs like being a taxi driver, or a bricklayer, or a bartender. Jobs where the average person would certainly flounder, but a large fraction of practitioners have reached the master-competency bucket, and there are severely diminishing returns to further improvements in skill.

And there are also tons of professions where opportunities are so limited, that employers can choose to hire only those with expert competency. For example, pianists. Amateur pianists abound in every family gathering, but you will never find them playing in a concert hall, given that the number of talented pianists far outstrips the number of scheduled concerts.

And finally, you have the jobs that are hard to master, with plentiful opportunities, but regulatory barriers to entry. For example, medical doctors. In a pre-Obamacare world, there were plenty of people who couldn’t afford health insurance, who would have chosen “some” healthcare over no healthcare at all (whether or not that is advisable, is a whole other can of worms). And yet, because of strict regulations, only those showing great competency are allowed to offer medical services. Similarly for many other engineering disciplines that face tremendous regulatory oversight, such as building bridges or skyscrapers or medical devices.

Software development is a curious cross-section of all the above. It is easy to learn, so legions of “good enough” developers exist all around the world. Developers who build software solutions that are somewhat useful, but littered with bugs and security vulnerabilities. And at the same time, it is hard to master, so developers who can avoid the above pitfalls, are much harder to find.

Software development is so opportunity-rich that most employers find it almost impossible to recruit expert developers. It is so opportunity-rich that, despite the veritable legions of novice developers worldwide, jobs exist for each and every one of them.

And lastly, software engineering has no gatekeeping whatsoever. Anyone can attend a coding bootcamp, take a few online coding classes, and start selling their services on Upwork the next day. And their work product can be rolled out into production immediately, with no regulatory oversight.

Combine all three, and it is easy to see why there’s so much crappy software out there. Software is eating the world, and so too are its bugs and security holes.

Addendum: As critical as this article may sound, I am not recommending that we gatekeep the software profession, or subject all software systems to regulatory approval. This is meant to be a descriptive essay, not prescriptive.

This essay also focuses solely on the developer side of the equation. Managers and CEOs not giving developers enough time to build polished software, and preferring to rush out something that is “good enough”, is certainly another major reason that will be explored in a different essay.

If driving taxis and bar tending were ‘easy to master’ why are there so many people so hopeless at doing such jobs and always will be? As for construction workers I would hardly describe bricklaying as something that is easy to learn.


> If driving taxis and bar tending were ‘easy to master’ why are there so many people so hopeless at doing such jobs and always will be?

I’m defining “easy to master” as activities where the difference in outcomes between an “expert” practitioner and the average practitioner, is relatively small. This doesn’t mean that everyone can do that job, or that there aren’t people on the lower end who are very bad at that job.


I’m curious: how do you know that?

I have never done a construction job in my life, but I can easily imagine somebody who has done it for 20 years and is invested in his (or her) job being way better than a newbie.


One good heuristic is to look at market wages. Total compensation for software developers in the USA varies from 70k all the way up to 1M. This is indicative of the difference in capabilities between a Google Principal/Fellow developer and the average developer you’ll find at various companies. You don’t see such variety in wages, within a single job, for construction workers.


It is not just the developers who are to blame. I think that the people who use the software and the people who decide on and buy the software have vastly different ideas. This is a reason why I really dislike enterprise software. Most of it is really awful and unusable, built just to have the features that get the people who decide and buy to choose it over another product.


From my point of view there are two other aspects to that topic:

If you compare it to the bridge, software is not seen as a critical asset that could threaten someone’s life. Thus, the perceived value of software development is not as high as in the other examples.

The second aspect is experience. We have been developing software for about 50-70 years, but humans have been building bridges for thousands of years. The experience in building them is much greater than in building software, and in particular the influencing/environmental factors are more predictable, as they do not change (much) during the build phase. How many software projects started with a clear plan and then needed to adapt to changes in operating systems, hardware, or other software?


Dear Rajiv,

the cry for more developers is as old as software.

Developing software can be seen as a talent. But that is only a potential that may or may not be fully realized in a person. We are humans: we are born prematurely, blind, unable to walk, and helpless. We have to learn everything. Usain Bolt would not have become a record-smashing athlete if he had not been trained continuously and helped to overcome 2 major injuries.

As an aside, societies have different ways of not finding and training talent.

So, if you want a developer to become a better developer, put that person in a position where they can learn.

Another method is to put that person into a synergetic organisation. Because ultimately, today’s software is bigger than a single head. In production, that’s the Toyota principle.

In software, it is usually fast feedback from extensive testing.

Which brings me to the next problem. What if you had a bunch of great developers?

They are still going to produce crappy software for any number of reasons.

E.g., developers who are great individually, but uncommunicative. That is, nobody knows how they solve their part of the problem. Which leads to assumptions being made and more crappy software.

This comes on top of all the assumptions between developers, their management and the customer.

Then add the fact that software is worse than radioactivity. You need a device to see if it is there and what it does.

Which will lead people paying for software to ask for changes all the time, esp. if there is a lot of time between ask and delivery.

Then add no time and no funding for fast feedback from extensive testing.

I’m sure I am missing some reasons, like documentation.

Food for thought: Why the hell did we have a Y2K problem? Like in software used for, e.g., life insurance that was written in the 1970s/1980s?


We had a Y2K problem because using only two digits for the year made sense in the 70s, 80s, and 90s for many applications: it saved space and the problem decades later would belong to someone else.
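A minimal Python sketch of how that shortcut breaks. The function names and the example policy dates are hypothetical, chosen only to illustrate the arithmetic:

```python
def years_elapsed_two_digit(start_yy: int, end_yy: int) -> int:
    """Subtract years stored as two digits, as a space-saving record would."""
    return end_yy - start_yy


def years_elapsed_four_digit(start_year: int, end_year: int) -> int:
    """Same subtraction with full four-digit years."""
    return end_year - start_year


# A hypothetical policy issued in 1975 and evaluated in 2000.
print(years_elapsed_two_digit(75, 0))        # -75: the year 2000 looks like 1900
print(years_elapsed_four_digit(1975, 2000))  # 25
```

The two-digit version works fine for any pair of dates inside the same century, which is why the problem could stay hidden for decades.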

The essay makes some good points but leaves out the problems with development environments, the widespread idea of testing as an afterthought, and the business environment under which software is developed.
